Part of our brain’s job is determining what’s around us. It does this mainly through the 5 senses: sight, hearing, touch, smell, and taste. However, these senses—especially sight and hearing—often have incomplete information. For example, many objects that we look at are partially blocked from our view. Our brains make up for these deficits using our prior knowledge and expectations to fill in the gaps. This process is called sensory inference.

We use sensory inference so often that we barely notice. Take a coffee table: without sensory inference, you would fail to recognize the table as soon as you set down your drink and hid part of its surface from view! Despite how common sensory inference is, scientists don’t fully understand how our brains do it. Recently, a team of researchers at the University of California, Berkeley, set out to understand the brain-level processes underlying sensory inference in mice.

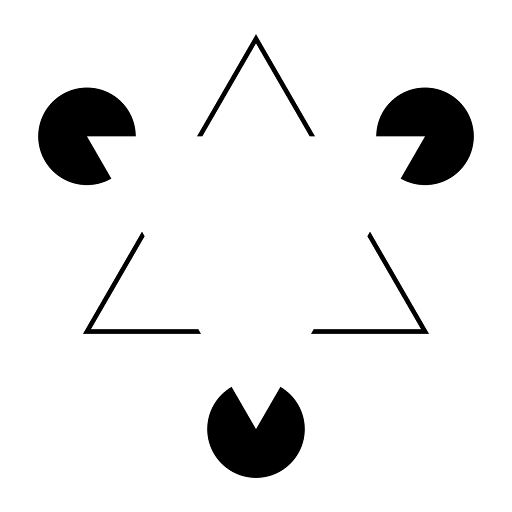

Previous researchers found that mice, like humans, are susceptible to the Kanizsa illusion, pictured here. This illusion exploits sensory inference. Most people perceive an upside-down triangle, even though the image shows only 3 incomplete circles and 3 angles. Researchers have demonstrated that similar illusions trigger sensory inference in mice. To continue this line of work, the team at Berkeley used mice’s brains to help understand how human brains carry out sensory inference.

“Kanizsa triangle” by Fibonacci is licensed under CC BY-SA 3.0. Most people looking at this image see a white triangle in the middle, rather than just three incomplete circles and three angles. This is because of sensory inference.

The researchers used 2 methods to detect brain activity in mice. First, they surgically implanted sensors, called Neuropixels, in the brains of 14 mice, which allowed the team to monitor many neurons simultaneously. In the second method, referred to as two-photon imaging, they examined the brains of 4 mice using a special microscope that can see the activity of individual neurons.

They explained that these 2 methods have complementary strengths and weaknesses. Neuropixels provide a broad view of brain activity, while two-photon imaging focuses on single neurons or small groups of neurons, called circuits. The researchers conducted each experiment on 2 groups of mice: they studied one group with Neuropixels and the other with two-photon imaging.

To figure out how sensory inference works, the researchers first determined which neurons were responsible for the mice perceiving a white triangle in the Kanizsa illusion. They recorded the brain activity of each group of mice while showing them 2 kinds of images: some were illusions, like the example, and others contained real shapes. They found that a region towards the back of the mice’s brains that processes low-level visual information, called V1, showed similar activity in response to the illusions as it did in response to the real shapes.

The researchers found that 2 types of neurons in the V1 region contributed to sensory inference. The first type consisted of neurons that only responded when shown an illusion. That is, they responded specifically to shapes that weren’t there. The researchers called these neurons illusory shape encoders. The second type showed similar activity regardless of whether there was an illusion, seemingly reacting to the individual shapes in the image. The researchers called these neurons segment responders.

The team compared both types of neurons using machine learning algorithms. They found that illusory shape encoders, seemingly responsible for the illusion, were more connected to brain regions outside of V1 that are linked to higher-level visual processing. This suggested that illusory shape encoders and similar neurons help the brain use expectations to fill in information gaps. However, the researchers still weren’t sure how these neurons accomplished this goal.

The researchers hypothesized that partial visual information triggers the illusory shape encoders, which then activate other neurons in V1, making it seem as if the illusory shape were really there. To test this hypothesis, they used lasers to stimulate the illusory shape encoders in mice that weren’t looking at anything. The illusory shape encoders then activated neurons throughout V1, causing the mice to experience the sensation of seeing a real shape.

They concluded that 3 consecutive circuits help create sensory inference illusions in mice. First, segment responders detect individual shapes and send signals to higher-level processing regions of the brain, which determine what information is missing and how to fill in the gaps. Then, these higher processing regions activate illusory shape encoders. Finally, the illusory shape encoders complete the pattern, activating the rest of V1 and creating the sensation of seeing an actual shape.

Although this research team focused on illusions, they argued that their findings could apply to sensory inference in general. As the scientific understanding of brain mechanisms like sensory inference expands, future researchers may be able to generalize these results to other functions of the human brain, such as memory and language processing.